Merge pull request #27 from ipfs/chores/make-docs

docs(architecture): flesh out architecture docs


docs/architecture.md (new file, mode 100644)

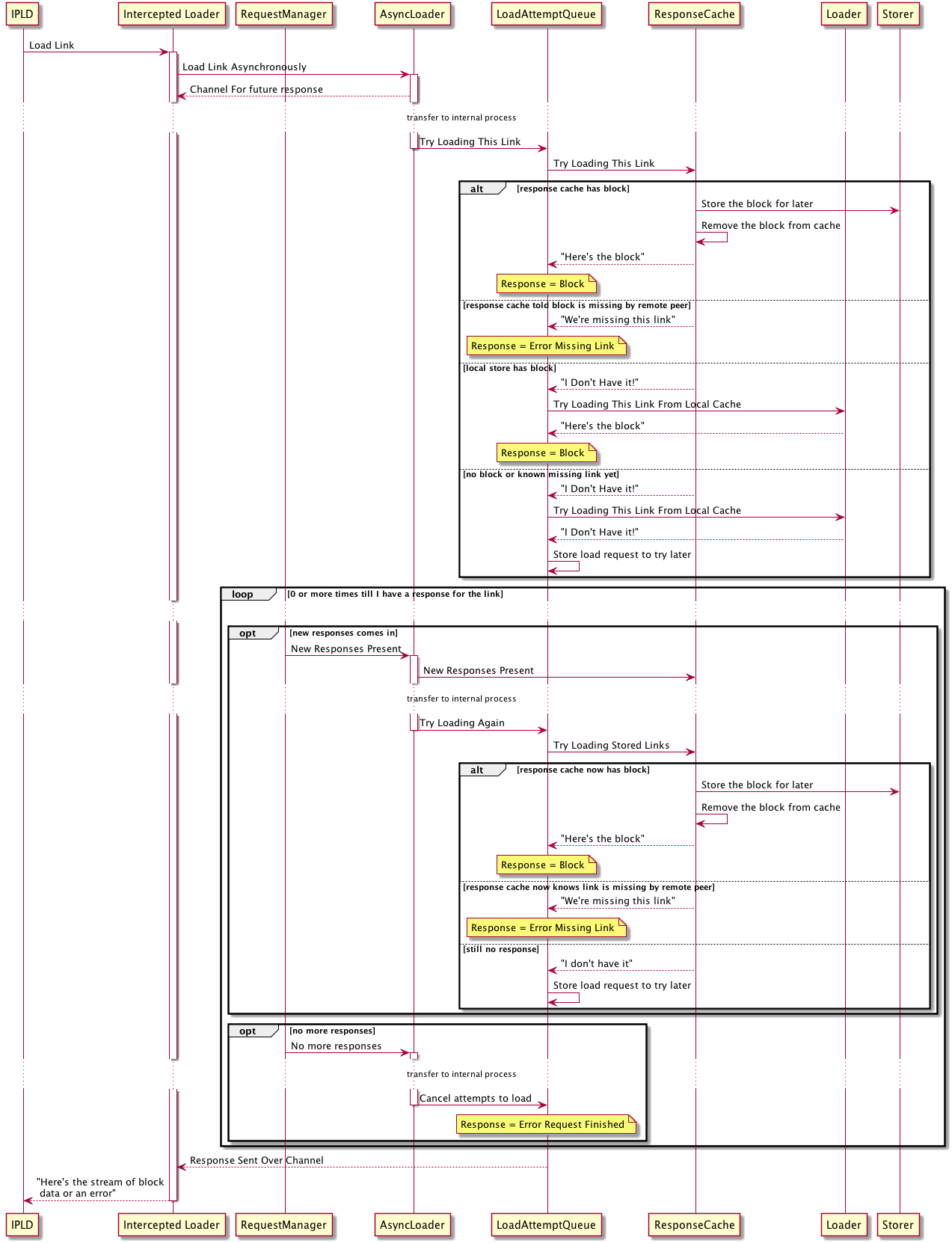

docs/async-loading.png (new file, mode 100644, 117 KB)

docs/async-loading.puml (new file, mode 100644)

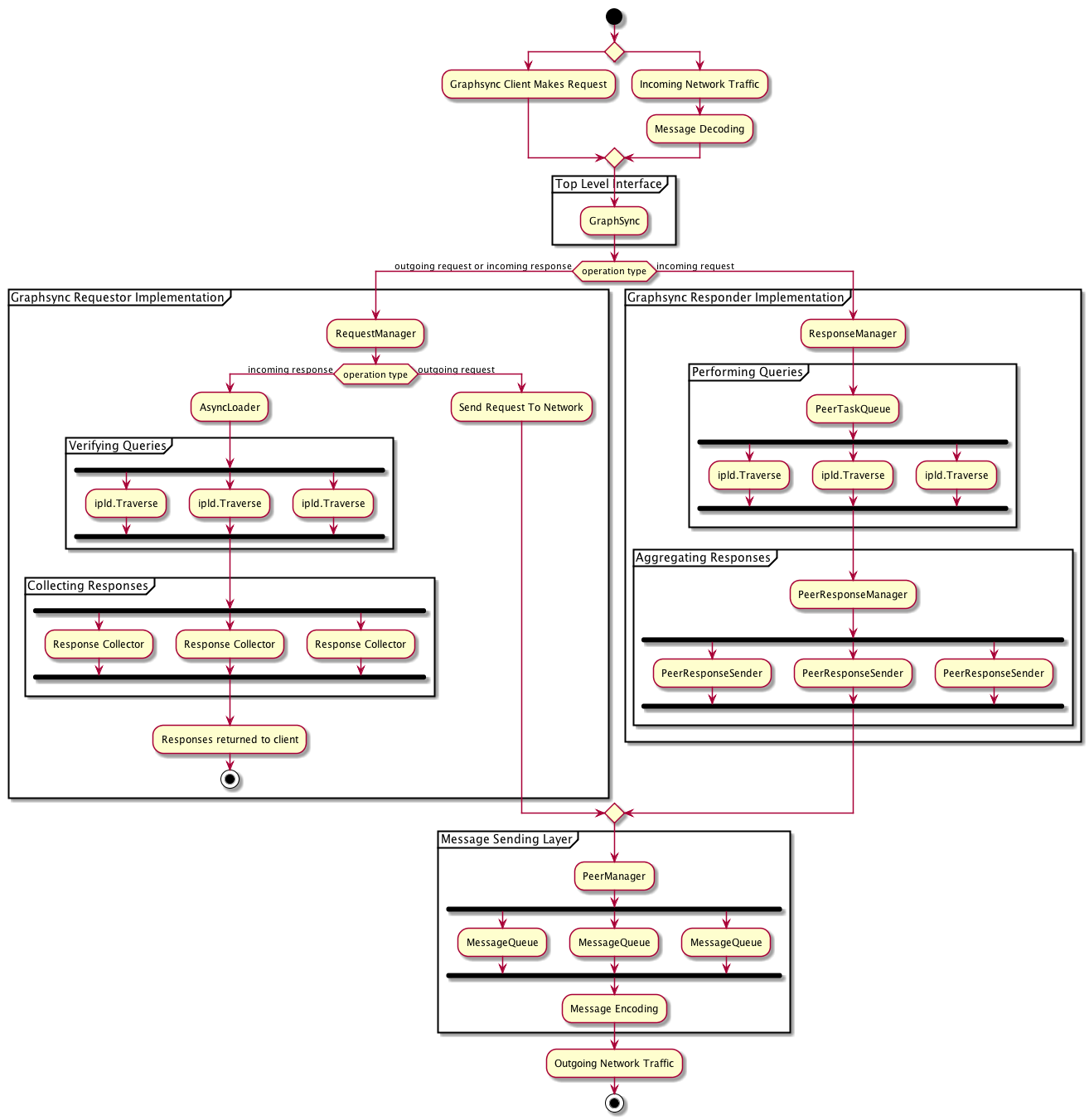

docs/processes.png (new file, mode 100644, 95.3 KB)

docs/processes.puml (new file, mode 100644)

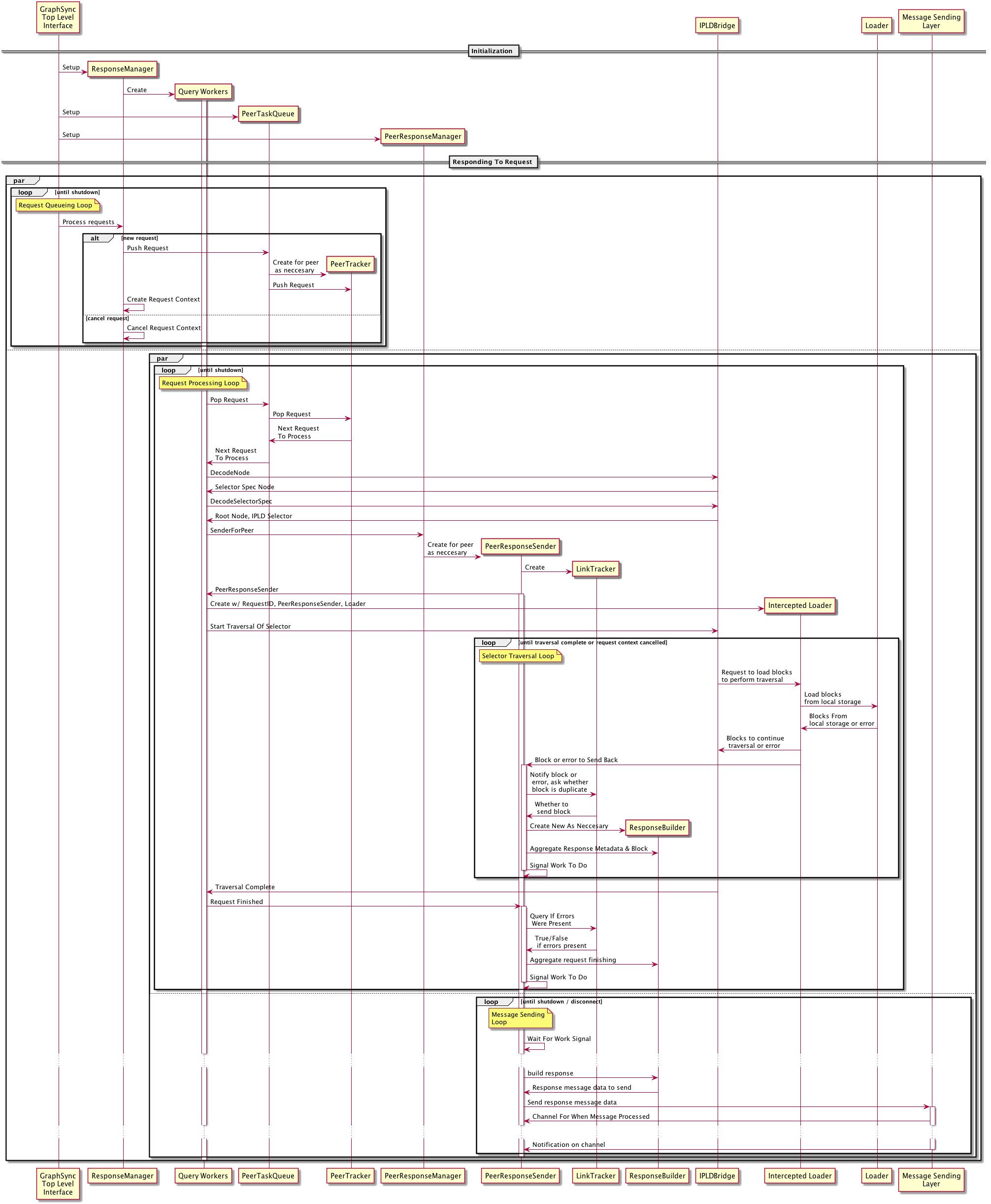

docs/responder-sequence.png (new file, mode 100644, 212 KB)

docs/responder-sequence.puml (new file, mode 100644)

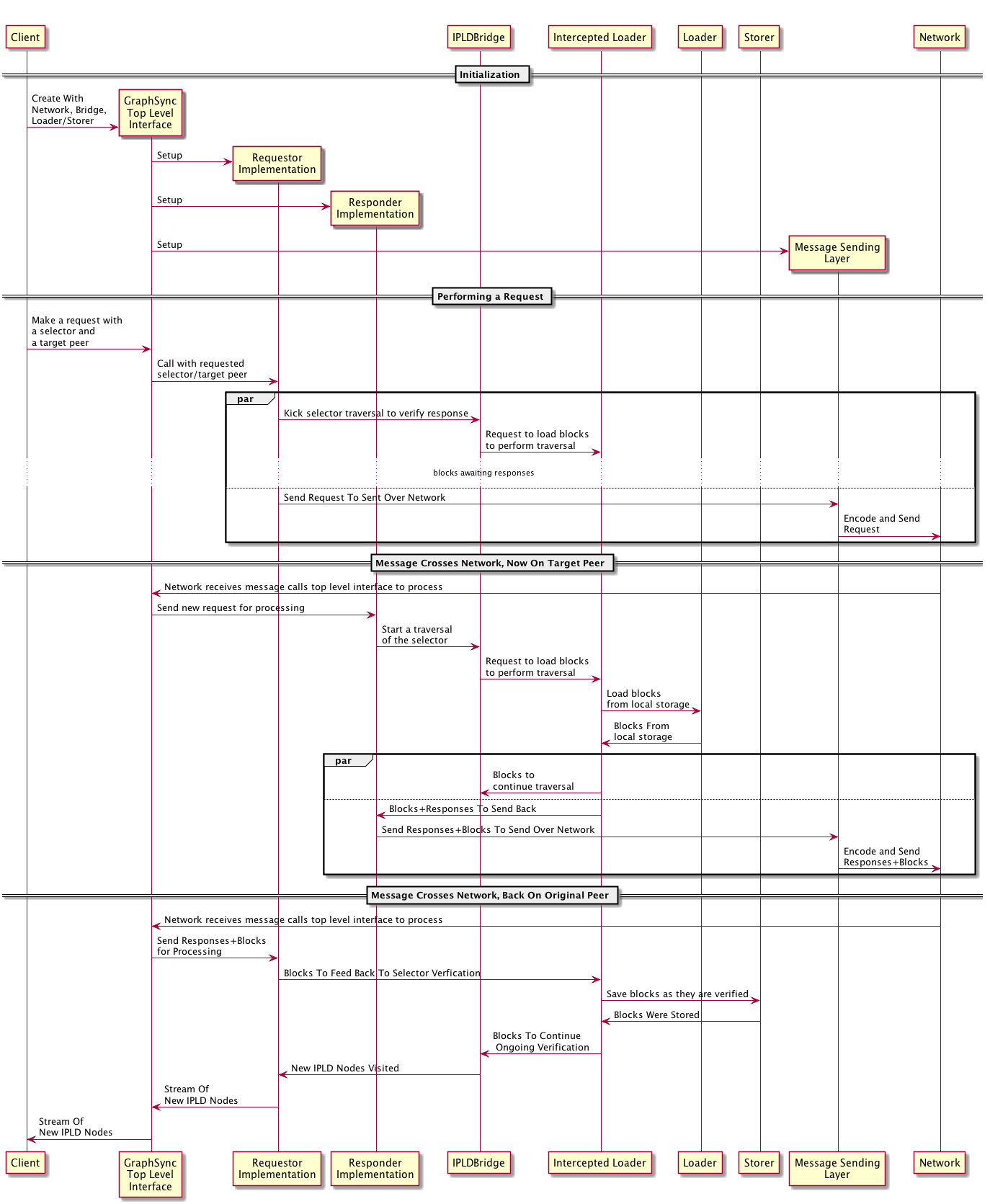

docs/top-level-sequence.png (new file, mode 100644, 129 KB)

docs/top-level-sequence.puml (new file, mode 100644)